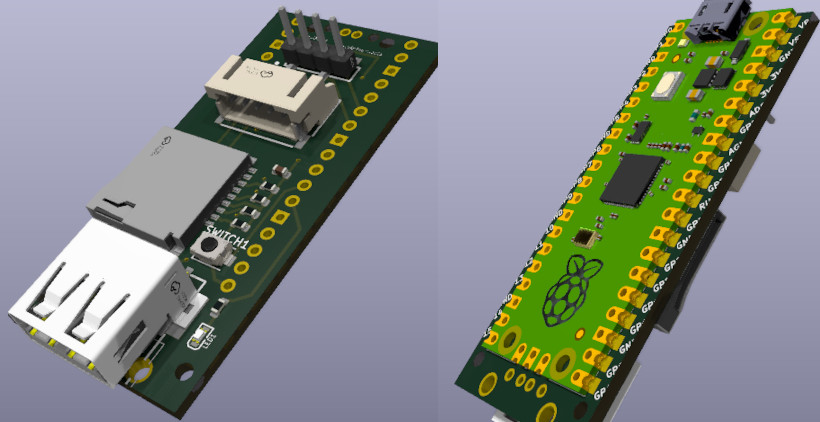

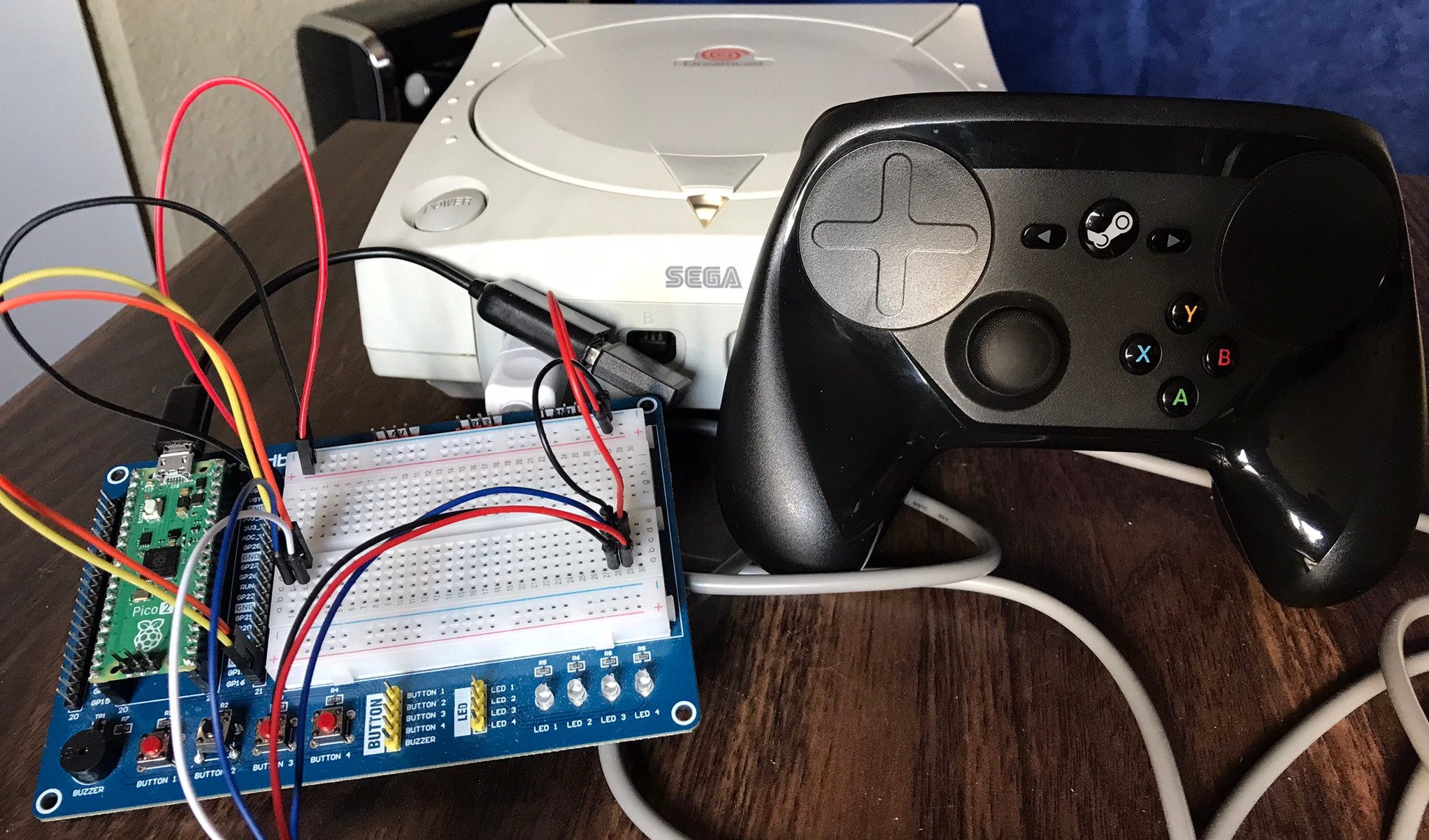

An often requested feature for Pico2Maple (a USB/Bluetooth to Sega Dreamcast adapter) is the option to run it on the RP2040 chip (original Pi Pico) in addition to the RP2350 chip (Pico 2). The RP2040 is much more common for projects similar to Pico2Maple, and boards based on the RP2040 tend to be a little bit cheaper than equivalent RP2350 versions.

So, recently, I wanted to make an earnest attempt to port Pico2Maple's firmware to run on the RP2040 chip. Overall, it turned out to be a pretty straightforward process. There were a couple hurdles, which I talk about here, but everything else just sort of worked for the most part.

Here, I wanted to discuss some of the changes that were required to port Pico2Maple's firmware to run on the RP2040.

PIO Pin Masking

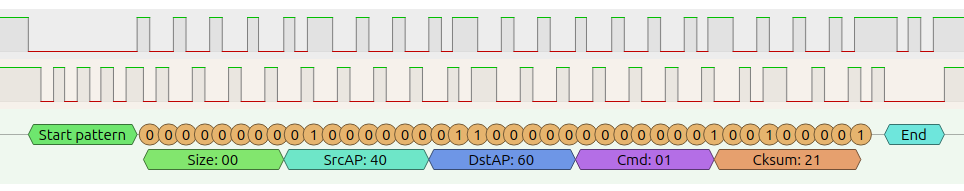

The RP series of chips have a cool hardware feature called programmable input/output (PIO). These are like little tiny co-processors that can run at a fixed clock rate. Each PIO block has 4 state machines, each with a few registers, and access to a shared space of up-to 32 instructions from a very limited set of instructions.

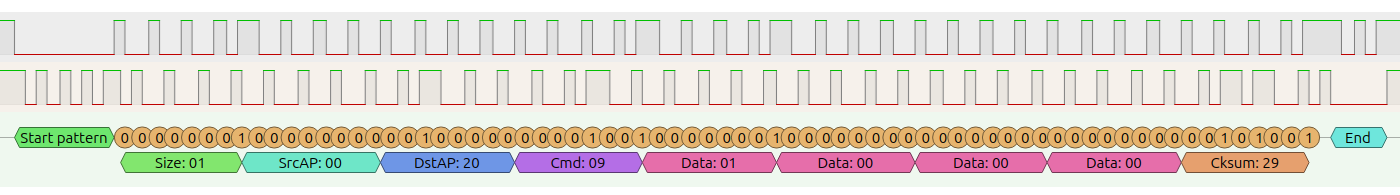

The maple_rx PIO program in Pico2Maple uses a new pin masking feature that is only available on the RP2350. When a PIO program reads pins into a register using the mov instruction, it will normally read the all 32 pins starting at the user-defined base pin. This makes it hard to compare pin reads directly because the unused pins will fill the register with garbage data. The masking feature causes only the desired pins to actually be read into the register, the rest of the bits will be set to '0'.

Pico2Maple reads the Maple bus by continuously reading both Maple data pins and waiting for either of them to change, and taking some action depending on which pin changed and in which direction.

Recreating the masking behaviour on the RP2040 turned out to be relatively simple, albeit at the cost of a couple extra PIO instructions.

; Using masking

mov x pins ; Read pins with masking, only the first 2 bits will have data

Becomes:

; Without masking

mov x pins ; Read pins w/o masking, 2 bits of real data with 30 bits of garbage

mov osr x ; Copy register into output shift register

out x 2 ; Shift out 2 bits into register x, recreating the masking behaviour

So, one problem solved.

Lack of PIO Blocks

Pico2Maple uses all 3 PIO blocks available in the RP2350. Both of the maple_rx and maple_tx PIO programs are close to the 32 instruction limit, so they were each given a dedicated PIO block. Since each PIO block has 4 state machines available, it would be theoretically possible to support 4 controllers at once from a single Pico 2. However, this never got implemented and the whole Maple stack continued to use 2 full PIO blocks. The third PIO block on the RP2350 is used by the wireless chip driver to run a SPI interface to the CYW43 chip.

However, this whole PIO setup poses a problem for the RP2040 since it has only 2 PIO blocks available. My first attempt to get around this was to try and reduce the instruction count of one of the Maple PIO programs, so that it could share the PIO block with the PIO-SPI program used to communicate with the wireless chip. Multiple programs can share the same PIO block, as long as their combined instruction count is under the 32 instruction limit, and use separate state machines. This sort of worked, both were able to run from the same block, but Bluetooth activity was causing a lot of crashes. My thought was that because the same PIO block was being managed on two separate MCU cores (Bluetooth on core 0, Maple on core 1) that there were some concurrency issues.

Rather than trying to work around that, I took another approach of using only 1 PIO block for all the Maple stuff and dynamically swapping out the maple_rx and maple_tx programs when needed. This left the second PIO block free for exclusive use by the wireless chip driver.

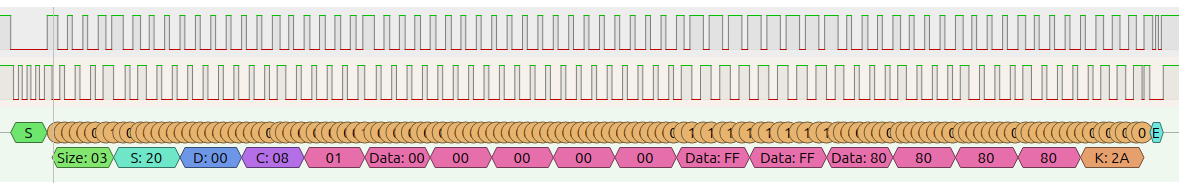

Getting the program swapping to work without disturbing the signal on the Maple data pins took a bit of finessing, made slightly more complicated by the fact that pin control swaps between the PIO, SIO (general GPIO control), and letting the Dreamcast take control. The state of the Maple pins had to be setup in a very specific way otherwise they would briefly change when pin control was changed between different hardware. Even a brief blip would cause issues on the Dreamcast side. But after a bit of trial-and-error, it all seemed to work well in the end.

The basic flow goes like this:

Initialize PIO and load maple_tx

|

Read Maple: unload maple_tx, load maple_rx, start program

|

Parse packet, prepare response packet

|

Write Maple: unload maple_rx, load maple_tx, write packet

|

Immediately loop back to reading as quickly as possible

The whole process does run a bit slower than before, since it takes some time to unload and load PIO programs, but it seems to work quite well. Even on WinCE games like Sega Rally 2, that are particularly annoying to deal with, everything runs as expected.

Marking critical Maple functions as 'no_flash' sped things up markedly as well. Marking functions as 'no_flash' causes the code to be copied to and executed from RAM. Normally code is loaded and executed from flash using the RP2040/2350's execute-in-place (XIP) feature, this can cause delays as code needs to fetched.

All the Small Things

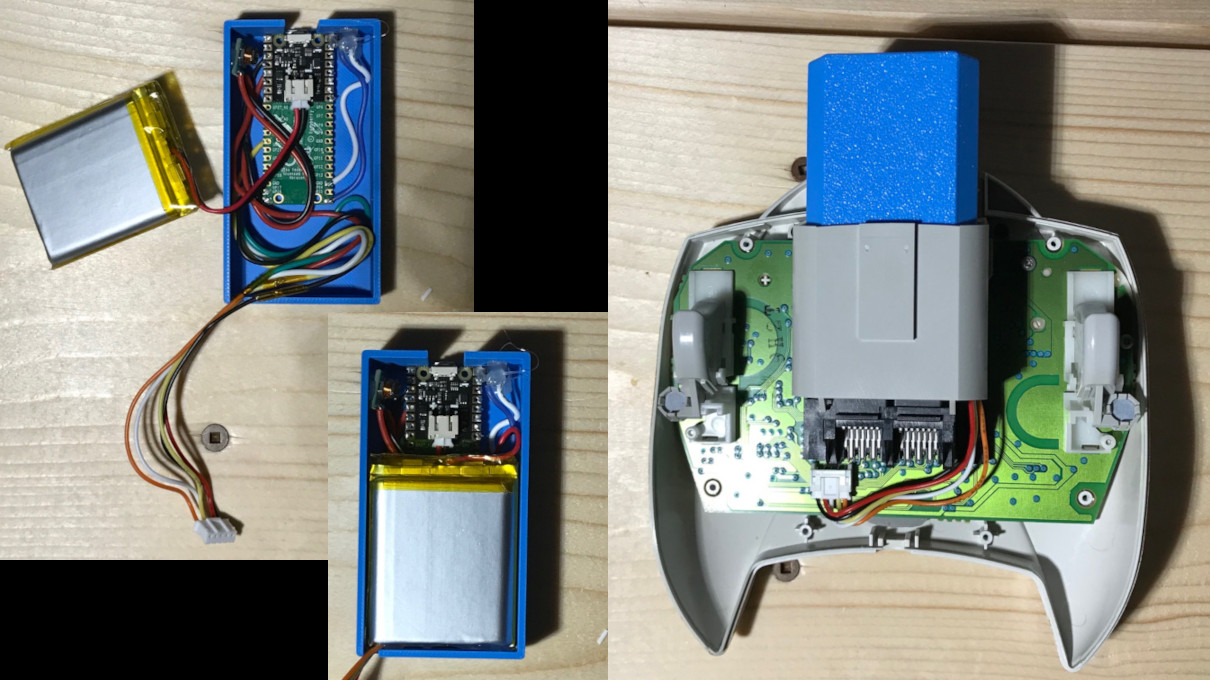

That ended up being pretty much it in terms of major hurdles. The rest of the needed changes were just some housekeeping things like reducing the size of some pre-allocated buffers to make sure everything would fit in RAM. The RP2040 only has around 256KB of RAM (vs the over 512KB on the RP2350), and 128KB of that is taken up for the VMU. But there was still enough for everything else.

One kind of curious bug that cropped up was due to unaligned 32-bit accesses. Some elements in a packed struct were being accessed by first casting it to a uint32_t pointer, but the elements were not 32-bit aligned and this caused the RP2040 to crash. The RP2350 apparently supports such memory accesses but the RP2040 does not. Interesting to see little hardware differences like this.

And that's pretty much it. I hope to have some public builds available soon for folks to try out if they are interested.